CanOpener Studio vs. Waves Nx

The Goodhertz mixing desk

In the Goodhertz audio lab in California, Goodhertz founder Devin Kerr does the majority of his critical listening at the mixing desk.

In August of 2012, after months of working on CanOpener Studio, and a lifetime of listening to music mostly on headphones, I got my first opportunity to visit the lab and hear music the way Devin does, at that desk, on a pair of professional-grade speakers — a highly controlled acoustical environment, tuned for his professional tasks (mixing, mastering, algorithm-designing).

My first thought: This sounds amazing. But a second thought followed the first one closely: This sounds a lot like CanOpener. CanOpener Studio had captured the spatial characteristics of a great loudspeaker setup incredibly well.

Flash forward to this January, to the 2016 NAMM Conference, where Devin and I got the chance to try out Waves’ newest plugin, Nx — Virtual Mix over Headphones. Under the watchful gaze of a Waves technician, I put on their headphones and pressed play.

First thought when trying out Nx? This doesn’t sound anything like the lab speakers or CanOpener Studio.

What makes CanOpener work?

Hearing music on headphones is a lot different from hearing music on speakers. In our lab, in the mixer’s chair, sounds from the left and right speakers hit both of your ears. So even when Ringo’s drums are coming only from the left speaker, those sound waves still make it to your right ear. But it’s no simple level adjustment. The waves interacted with a large obstacle (you) on the way to your right ear, meaning your right ear hears those left-speaker sounds modified in a thousand ways — changes in phase, spectrum, and intensity.

Headphones, by contrast, are loudspeakers strapped to your ears. No chance for Ringo to make it from left to right.[1]

Unless, after popping on a pair of headphones, you pop CanOpener Studio on the master bus. Using crossfeed, CanOpener models the most important spectral, timbral, and intensity modifications that you’d hear in our studio. Which is nice when listening to music, but essential when making critical panning and spatial decisions about sound. That is, when mixing or mastering.
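To make the idea of crossfeed concrete, here is a minimal sketch in Python. It is not CanOpener Studio's algorithm, which this post does not describe; it only illustrates the general technique of mixing an attenuated, delayed, low-passed copy of each channel into the opposite ear. The function name `crossfeed` and every parameter value (300 µs delay, 700 Hz cutoff, -6 dB feed level) are assumptions chosen for illustration.

```python
import math

def crossfeed(left, right, sample_rate=44100, delay_us=300.0,
              cutoff_hz=700.0, feed_db=-6.0):
    """Toy crossfeed: add a delayed, low-passed, attenuated copy of each
    channel to the opposite channel. All defaults are illustrative guesses."""
    if len(left) != len(right):
        raise ValueError("channels must have the same length")
    # Extra travel time to the far ear, rounded to whole samples.
    delay = int(round(delay_us * 1e-6 * sample_rate))
    # Level drop at the far ear, converted from dB to linear amplitude.
    gain = 10.0 ** (feed_db / 20.0)
    # One-pole low-pass (crude head shadow): y[n] = y[n-1] + a * (x[n] - y[n-1])
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)

    def far_ear(x):
        out, y = [], 0.0
        for n in range(len(x)):
            delayed = x[n - delay] if n >= delay else 0.0
            y += a * (delayed - y)
            out.append(gain * y)
        return out

    left_to_right = far_ear(left)
    right_to_left = far_ear(right)
    out_left = [l + c for l, c in zip(left, right_to_left)]
    out_right = [r + c for r, c in zip(right, left_to_right)]
    return out_left, out_right

# Example: an impulse hard-panned left, with silence on the right.
left = [1.0] + [0.0] * 99
right = [0.0] * 100
out_left, out_right = crossfeed(left, right)
print(max(out_right) > 0.0)  # True: some of the left signal now reaches the right ear
```

With Ringo's drums hard-panned left, the right output is no longer silent: a quieter, duller, slightly late copy shows up there, which is roughly what the far ear picks up from a loudspeaker. A real implementation would model far more than this toy filter does.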

Where does Nx go wrong?

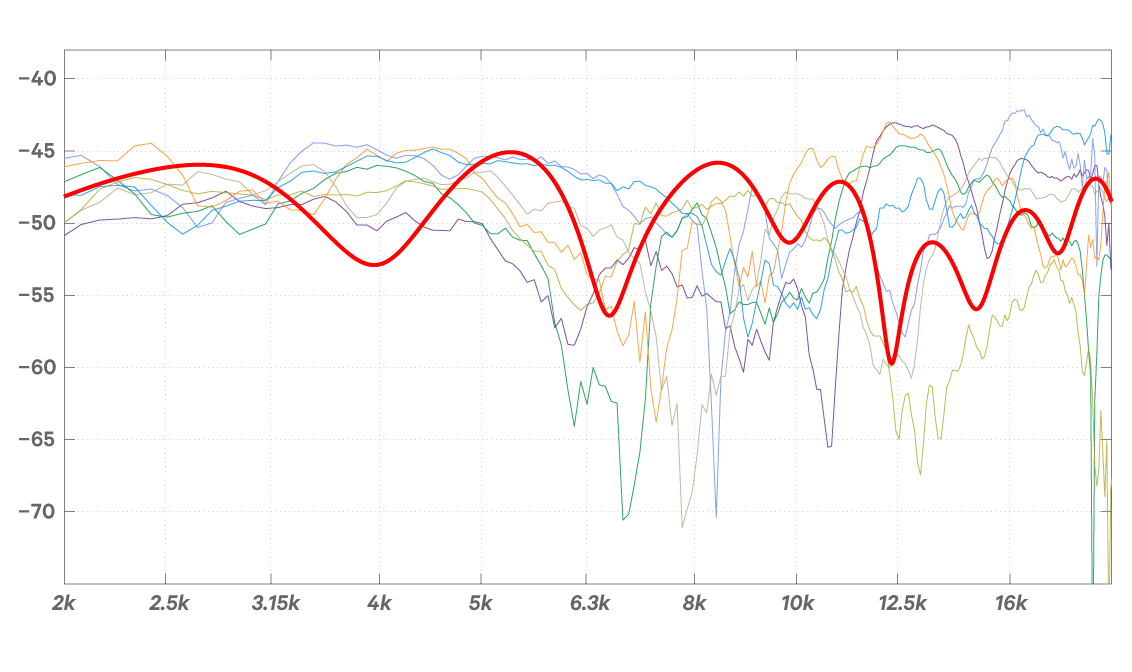

Unlike CanOpener Studio, which incorporates broad characteristics of loudspeakers for better headphone monitoring, Nx wants to simulate a 3D virtual loudspeaker experience. At first glance, this might seem like a positive enhancement, but after listening tests and deeper examination, it’s clear that the “Virtual Mix Room” comes at the expense of accuracy and fidelity to the source. Attempting to create a 360° illusion means that Nx must drastically alter your signal’s frequency spectrum.

In real life, spectral cues are the only way we’re able to distinguish between sounds that are in front of us and sounds that are behind us. The problem is that these spectral cues differ greatly from person to person, so it’s very easy to get them wrong.

For almost every individual plotted above, the error compared to Nx is more than 25 dB. What does a 25 dB frequency error sound like? Like a pretty massive hole in the frequency spectrum, far from natural. When Waves introduced Nx, they put out a press release claiming that “Nx does all this without coloring your sound in any way.” But a 25 dB error in a critical frequency band is the very definition of coloring your sound.
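For a sense of scale, the short Python sketch below converts a decibel figure into plain ratios using the standard definitions (20·log10 for amplitude, 10·log10 for power). The helper names are made up for this example.

```python
def db_to_amplitude_ratio(db):
    """Linear amplitude ratio for a level difference in dB."""
    return 10.0 ** (db / 20.0)

def db_to_power_ratio(db):
    """Linear power ratio for a level difference in dB."""
    return 10.0 ** (db / 10.0)

error_db = 25.0
print(round(db_to_amplitude_ratio(error_db), 1))  # 17.8
print(round(db_to_power_ratio(error_db), 1))      # 316.2
```

In other words, a band that is 25 dB too quiet has about one eighteenth of the amplitude it should have, and roughly one three-hundredth of the power.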

In Waves’ defense, Nx does make an effort to account for your ears’ variability by allowing you to input your own head dimensions. Unfortunately, head size alone doesn’t even come close to accounting for the myriad differences that make your ears sound like your ears. A recent paper on the subject suggested a minimum of eight different anthropometric measurements to improve simulation accuracy.[2]

Is the future 3D?

A 3D listening experience is arguably very important for virtual reality, video games, and surround sound mixing over headphones. But will a 3D experience make your stereo mixes better? Probably not. And is it worth a 25 dB frequency error in the wrong spot? Again, probably not.

Someday, it will likely be possible to accurately model the way you hear 3D sounds in space using headphones, but it will certainly require more than a simple measurement of your head’s circumference to do it convincingly. CanOpener makes working with headphones better, and it does it without creating more problems than it solves.

Crossfeed everywhere!

Unfortunately, even though most music today is heard on headphones, crossfeed is hard to come by in mainstream music listening apps. Beyond a few presets on lackluster equalizers, almost no major music apps offer any tools for customizing sound, let alone tools to make headphones sound better. (Maybe you happen to build a music listening app and are interested in changing that? Send us an email at licensing@goodhertz.com to find out more about licensing Goodhertz algorithms, including CanOpener Studio.)

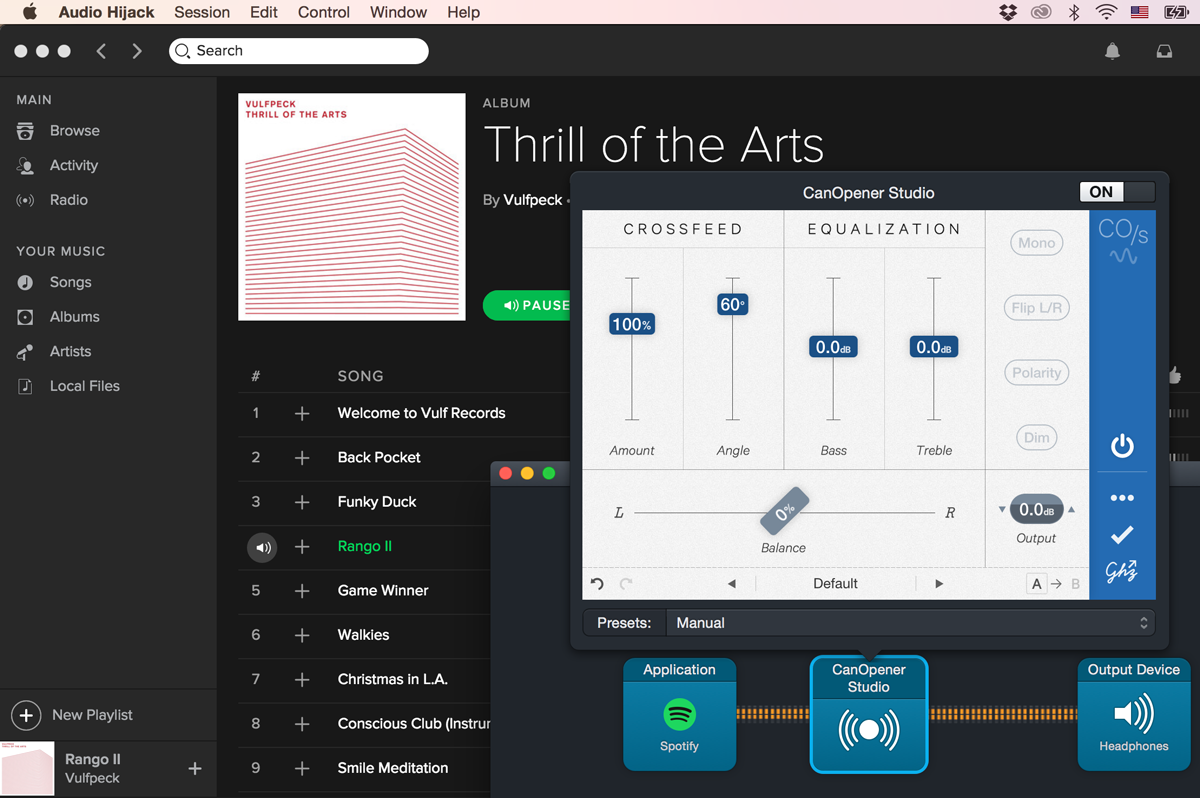

In the meantime, here’s a tip for making your headphones — no matter the price point — sound a lot better: grab a 15-day free trial of CanOpener Studio and a copy of the excellent Audio Hijack, then pop open your favorite music app, like so:

1. This is assuming that your headphones don’t have significant crosstalk between left & right channels, either electronic or mechanical (through the headphone’s band). Generally, though, for headphones that aren’t broken, the isolation between left and right channels is greater than 35 dB.

2. Hugeng, Wahidin Wahab, and Dadang Gunawan. “The Effectiveness of Chosen Partial Anthropometric Measurements in Individualizing Head-Related Transfer Functions on Median Plane.” ITB Journal of Information and Communication Technology, Vol. 5, No. 1 (2011): 35-56.